
This year’s conference was in Halifax and, as always, it was a wonderful opportunity to reconnect with my evaluation friends, make some wonderful new friends, to pause and reflect on my practice, and to learn a thing or two. And I think this is quite possibly the fastest I’ve ever put together my post-conference recap here on ye old blog! (The conference ended on May 29 and I’m posting this on June 14!)

Student Case Competition

The highlight of the conference for me this year was the Student Case Competition finals. In this competition, student teams from around the country, each coached by an experienced evaluator, compete in round 1 where they have 5 hours to review a case (typically a nonprofit organization or program) and then complete an evaluation plan for that program. Judges review all the submissions and the top 3 teams from round 1 move on to the finals, where they get to compete live at the conference. They are given a different case and have 5 hours to come up with a plan, which they then present to an audience of conference goers, including representatives from the organization and three judges. After all three teams present, the judges deliberate and a winning team is announced!

I had the honour of coaching a team of amazing students from Simon Fraser University. The competition rules do not allow teams to talk to their coaches when they are actually working on the cases, so my role was to work with them before the round, talking about strategies for approaching the work, as well as chatting with them about evaluation in general. Most of the students on the team had not yet taken an evaluation course, so I also provided some resources that I use when I teach evaluation.

I will admit that I was a bit nervous watching the presentations – not because I didn’t think my team would do well, as I know they worked really hard and are all exceptionally intelligent, enthusiastic and passionate, but because it’s a huge challenge to come up with a solid evaluation plan and a presentation in such a short period of time, and because they were competing among the best in the country!

But I need not have been worried. They came up with such a well thought through, appropriate to the organization, and professional plan and presented it with all the enthusiasm, professionalism, grace, and passion that I have come to know they possess. I was definitely one proud evaluation mama watching my team do that presentation and so very, very proud of them when they won! Congratulations to Kathy, Damien, Stephanie, Manal, and Cassandra! And to Dasha, who was part of the team that won round 1, but wasn’t able to join us in Halifax for the finals.

Kudos also go to the two other teams who competed in the finals – students from École nationale d’administration publique (ENAP) and Memorial University of Newfoundland (MUN). Great competitors and, as I had the pleasure of learning when we all went out to the pub afterwards, as well as chatting at the kitchen party the next night, all very lovely people!

Conference Learnings

As usual, I took a tonne of notes throughout the conference and, as usual for my post-conference recaps, I will:

- summarize some of my insights, by topic (in alphabetical order) rather than by session as I went to some different sessions that covered similar things

- where possible, include the names of people who said the brilliant things that I took note of, because I think it is important to give credit where credit is due. Sometimes I missed names (e.g., if an audience member asked a question or made a statement, as audience members don’t always state their name or I don’t catch it)

- apologize in advance if my paraphrasing of what people said is not as elegant as the way that people actually said them.

Anything in [square brackets] is my thoughts that I’ve added upon reflection on what the presenter was talking about.

Federal Government

- every time I go to CES, I find I learn a little bit more about how the federal government works (since so many evaluators work there!). This time I learned that Canada Revenue Agency (CRA) doesn’t report up to Treasury Board – they report to Finance

Indigenous Evaluation

- the conference was held on Mi’kma’ki, the ancestral and unceded territory of the Mi’kmaq People.

- the indigenous welcome to the conference was fantastic and it was given by a man named Jude. I didn’t catch his full name and I couldn’t find his name in the conference program or on Twitter. [Note to self: I need to do better at catching and remembering names so I can properly give credit where credit is due]. He talked about how racism, sexism, ableism, transphobia, and other forms of oppression are at play in the world today. He also talked about how there is a difference between guilt and responsibility. We need to take responsibility for making things better now, not just feel guilty about the way things are.

- Nan Wehipeihana talked about an evaluation of a sports participation program and how they moved from sports participation “by” Māori to sports participation “as” Māori. They talked about what it would look like to participate “as” Māori (e.g., using Māori language, Māori structures (tribal, subtribal, kin groups) are embedded in the activity, activities occur in places that are meaningful to Māori people (e.g., kayaking on our rivers, activities on our mountains)). Developed a rubric in the shape of a five-point star (took a year to develop).

- I went to a Lightning Roundtable session hosted by Larry Bremner, Nicole Bowman, and Andrealisa Belzer where they were leading a discussion on Connecting to Reconciliation through our Profession and Practice. One of the things that Larry mentioned that struck me was the importance of not just indigenous approaches to evaluation, but indigenous approaches to program development. It doesn’t make sense to design a program without indigenous communities as equal partners and then to say you are going to take an indigenous approach to evaluation – the horse has left the barn by that point.

- They also talked about how evaluators are culpable for the harm that is still happening because we haven’t done right in our work. They talked about how the CES needs to keep the government’s feet to the fire on the Truth and Reconciliation Commission’s (TRC) Calls to Action. Really, after the Commission, there should have been a TRC implementation committee who could go around the country and help get the Calls to Action implemented (Larry Bremner).

- They talked about not only what can CES do at the national level, but what can we do at the chapter level. As the president of one of the chapters, this is something I need to reflect on and speak to the council about. I also need to revisit the Truth and Reconciliation Commission’s Calls to Action (as it was a while ago that I read that report) and read “Reclaiming Power and Place: The Final Report of the National Inquiry into Missing and Murdered Indigenous Women and Girls“, which was released the week after the CES national conference.

- I also went to a concurrent session where the panelists were discussing the TRC Calls to Action. They pointed out that CBC has a website where they are tracking progress on the 94 Calls to Action: Beyond 94.

- CES added a competency about indigenous evaluation in its recent updating of the CES competencies:

- 3.7 Uses evaluation processes and practices that support reconciliation and build stronger relationships among Indigenous and non-Indigenous peoples.

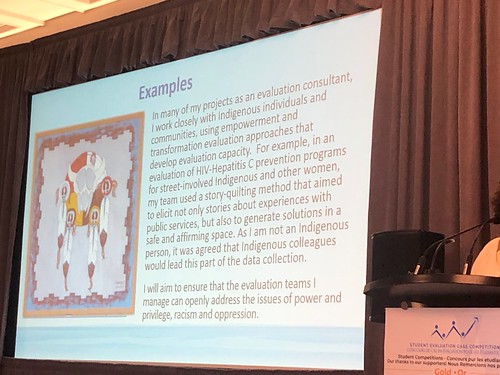

- Many evaluators saw this new competency and said “I don’t work with indigenous populations, so how can I relate to this competency?” [I will admit, I had that thought as well when the new competencies were announced. Not that I don’t think this is an important competency for evaluators to have – but more that I didn’t know how to apply it in the work I am currently doing or where to start in figuring out what I should do.]. The CES is trying to provide examples to support evaluators. (Linda Lee) E.g.:

- I also learned that EvalIndigenous is open to indigenous and non-indigenous people – anyone who wants to move forward indigenous worldviews and wants indigenous communities to have control of their own evaluations. So I joined their Facebook group! (Nicole Bowman and Larry Bremner)

- Evaluators typically use a Western European approach and many use an “extractive” evaluation process, where they take stuff out of the community and leave (I can’t remember if this slide was from Larry Bremner or Linda Lee).

- I also found this discussion of indigenous self-identification helpful (Larry Bremner):

- There is still so much work to do and so much harm being inflicted on indigenous people:

- there are more indigenous kids in care today than were in residential schools – this is the new residential schools. (Larry Bremner)

- During the discussion with the audience, some audience members mentioned “trauma tourism” – that it can be re-traumatizing for indigenous people to share traumas they have experienced, and that non-indigenous people, in their attempts to learn more about the experiences of indigenous people, need to be mindful of this and not further burden indigenous people.

- If you google “indigenous women”, all the results you get are about missing and murdered indigenous women and girls. Where is the focus on the strengths in the community?

Learning

- evaluators are learners (Barrington)

- Bloom’s Taxonomy is a hierarchy of cognitive processes that we go through when we do an evaluation – notice that evaluation is at the top – it’s the hardest part (Gail Barrington)

By Xristina la – Own work, CC BY-SA 3.0, Link

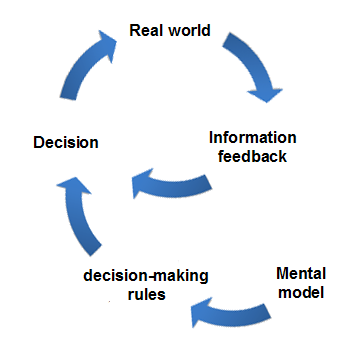

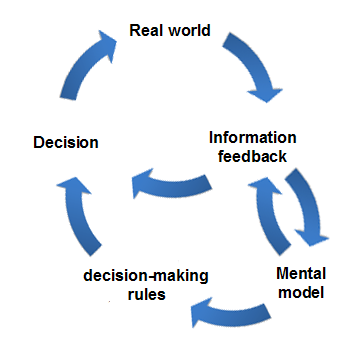

- single loop learning is where you repeat the same process over and over again, without ever questioning the problem you are trying to fix (sort of like the PDSA cycle). There’s no room for growth or transformation. (Gail Barrington)

By Xjent03 – Own work, CC BY-SA 3.0, Link

- in contrast, double loop learning allows you to question if you are really tackling the correct problem (sometimes the way that the problem is defined is causing problems/making things difficult to solve) and the decision making rules you are using, allowing for innovation/transformation/growth. (Gail Barrington)

By Xjent03 – Own work, CC BY-SA 3.0, Link

Pattern Matching

- “Pattern matching is the underlying logic of theory-based evaluation” – specify a theory, collect data based on that, see if they match (Sebastian Lemire)

- Trochim wrote about both verification AND falsification, but in practice most people just come up with a theory and try to find evidence to support it (confirmation bias) (Sebastian Lemire)

- humans are wired to see patterns, even when they aren’t there and we tend to focus on evidence in support of the patterns (Sebastian Lemire)

- having more data is not the solution! (Sebastian Lemire)

- e.g., when people were given more information on horses and then made bets, they didn’t get any more accurate in their bets, but they did get more confident in their bets

- evaluators need to do reflective practice – e.g., to look for our biases (Sebastian Lemire)

- structural analytic techniques (see slide below) – not a recipe, but a structured process (Sebastian Lemire)

- pay attention to alternative explanations – in the context of commissioned evaluations, it can be hard to get commissioners to agree to you spending time on looking at alternative explanations and we often go into an evaluation assuming that the program is the cause (bias) (Sebastian Lemire)

- falsification: specify what data you would expect to see if your hypothesis was wrong (Sebastian Lemire)

Power and Privilege

- since we have under-served, under-represented, and under-privileged people, we must also have over-served, over-represented, and over-privileged people (Jude, who gave the indigenous welcome. I didn’t catch his last name and I can’t find it on the conference website)

- recognize your power and privilege, recognize your biases and think about where they come from and work to prevent your biases from affecting your work (Jude, who gave the indigenous welcome. I didn’t catch his last name and I can’t find it on the conference website)

- and speaking of power and privilege, the opening plenary on the Tuesday morning was a manel. For the uninitiated, a “manel” is a panel of speakers who are all male. It’s an example of bias – men being more often recognized as experts and given a platform as experts when there are many, many qualified women. I called it out on Twitter:

- a friend of mine who is a male re-tweeted this saying he was glad to see that someone called it out and when I spoke to him later, he told me that people were giving him kudos for calling it out and he had to point out that it was actually a woman who called it out. So another great example of women being made invisible and men getting credit.

- I do regret, however, that I neglected to point out that it was a “white manel” specifically. There’s so much more to diversity than just “men” and “women”!

Realist Evaluation

- Michelle Naimi (who I know from the BC evaluation scene) gave a great presentation on a realist evaluation project she’s been working on related to violence prevention training in emergency departments. My notes on realist evaluation don’t do it justice, but I think my main learning here is that this is an approach that I can learn more about. I’m definitely inviting her as a guest speaker the next time I teach evaluation!

Reflective Practice

- I took a pre-conference workshop, led by Gail Barrington, on reflective practice. This is an area that I’ve identified that I want to improve in my own work and life, and a pre-conference workshop where I got to learn some techniques and actually try them out seemed like a perfect opportunity for professional development.

- Gail talked about:

- how she doesn’t see her work and her self as separate – they are seamless

- if you don’t record your thoughts, they don’t endure. (How many great ideas have you had and lost?) [I’d add, how many great ideas have you had, forgotten about, and then been reminded of later when you read something you wrote?]

- evaluators are always serving others – we need to take care of ourselves too

- The best part of the workshop was that we got to try out some techniques for reflective practice as we learned them

| Warm up activity: In this activity, we took a few minutes to answer the following questions: -Who am I? -What do I hope to get out of this workshop? -To get the most out of this workshop, I need to ____ Then we re-read what we wrote and answered this: -As I read this, I am aware that __________ |

- and that is an example of reflection!

- [Just had an idea! I could use that at the start of class to introduce the notion of reflective practice from the beginning of class. If I turn my class into more of a flipped classroom approach, I could have more in-class time to do fun, experiential things like this than listening to lecture 🙂 ]

| Resistance Exercise: Another quick writing exercise: -What are the personal barriers that hold me back from reflection? -What are the lifestyle/family barriers that hold me back from reflection? -What barriers at work are holding me back from being transformative? Then we re-read what we wrote and answered this: -As I read this, I am aware that __________ |

| The Morning Pages: Write three pages of stream of consciousness first thing in the morning in a journal that you like writing in. Before you’ve done anything else – and before your inner critic has woken up. If you can’t think of anything to write, just write “I can’t think of anything to write” over and over again until something comes to you. All sorts of things will pop up – might be ideas for a project you are working on, or “to do” items to add to your list. You can annotate in margins, transfer things to your main to do list later, or some of it might not be useful to you now and you don’t have to look at it again. |

- Gail said it’s very different writing first thing in the morning compared to later in the day. I know that I’m unlikely to get up an extra half hour earlier than I already do, but I could give this a try on a weekend morning when I’m not feeling rushed to get to work to see if it’s different for me too.

| Start Now Activity: -The thoughts/ideas that prevent me from journaling now ____ Then we re-read what we wrote and answered this: -As I read this, I am aware that __________ |

- for some people, writing is not for them. An alternative is using a voice memo app. We gave it a try in the workshop and I was kind of meh on it, but I used it two more times during the conference when I had a quick thought I wanted to capture. I think the challenge will be that if I want to retrieve those ideas, I’ll need to listen to the recordings, which seems like a big time sink, depending on how much I say (as I can be verbose).

- we also talked about meditation and went out on a meditative walk. (Gail put up the quotation “solvitur ambulando”, citing St. Augustine, and noting that it is Latin for “solved by walking”. But when I just googled it, it turns out that it was actually from the philosopher Diogenes, and actually refers to something that is solved by a practical experiment.) For our walk, we set an intention (to think about one thing that I’ll change at my work), then forgot about it and went for a mindful walk – paying attention to the sensations of walking (e.g., the feeling of your feet on the ground as you step, the colours and shapes and sounds and smells you encounter). It was a rainy day, but I was definitely struck with all the beauty around me, and was reminded about how beneficial mindfulness can be.

- My take home from all my reflections in this workshop was:

- taking time to do things like reflective practice and mindfulness meditation is a choice. I say that I don’t have enough time to do these things, but it’s actually that I have been choosing not to spend my time doing these things. There are a variety of reasons for those choices (which I did reflect on and got some valuable insights about). Remembering that this is a choice – and being more mindful of what choices I’m making – is going to be my intention as I return to work after my conference/holiday.

Rubrics

- I’ve been to sessions on Rubrics by Kate McKegg, Nan Wehipeihana, and their colleagues at a number of conferences and I always learn useful things. This year was no exception. The stuff in this section is all from McKegg and Wehipeihana (and they had a couple of collaborators who weren’t there but “presented” via video).

- rubrics are a way to make our evaluative reasoning explicit

- just evaluating on if goals are met is not enough. Rubrics can help us with situations like:

- what counts as “meeting targets”? (e.g., what if you meet an unimportant target but don’t meet an important one? Or you way exceed one target and miss another by a little bit? etc.)

- what if you meet targets but there are some large unintended negative consequences?

- do the ends justify the means? (what if you meet targets but only by doing unethical things?)

- whose values do you use?

- 3 core parts of a rubric:

- criteria (e.g., reach of a program, educational outcomes, etc.)

- levels (standards) (e.g., bad, poor, good, excellent; could also include “harmful”)

- some people don’t like to see “harmful” as a level, but e.g., when we saw inequities, we needed a way to be able to say that it was beyond poor and actually causing harm

- importance of each criterion (e.g., weighting)

- sometimes all criteria are equally important and sometimes not

- rubrics can be used to evaluate emerging strategies:

- evaluation can be used in situations of complexity to track evolving understanding

- in all systems change, there is no final “there”

- in situations of complexity, cause-and-effect are only really coherent in retrospect [things are not predictable] and do not necessarily repeat

- we only know things in hindsight and our knowledge is only partial – we must be humble

- need to be looking out continually for what emerges

- in complexity thinking, we are only starting to see what indigenous communities have long known

- our reality is created in relation, interpretive

- Western knowledge dismissed this

- need to bring things together to make sense of multiple lines of evidence

- “weaving diverse strands of evidence together” in the sensemaking process

- we have to make judgments and decisions about what to do next with limited/patchy information. Rubrics give us a traceable method to make our reasoning explicit

- having agreed on values at the start helps to navigate complexity

- break-even analysis flips return-on-investment:

- when you can’t do a full cost-benefit analysis (e.g., don’t have information on ALL costs and ALL benefits), can see if the benefits are at least greater than costs

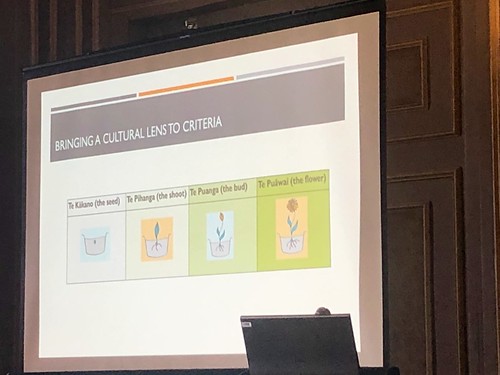

- think about how rubrics are presented – e.g., minirubrics with red/yellow/green

- but that might not be appropriate in some contexts – e.g., if a program is just developing and it’s unreasonable to expect that certain criteria would be at a good level yet

- a growing flower as a metaphor for different stages of different parts of a program may be more appropriate to a development program. May also be more appropriate in an indigenous context

- it’s important to talk about how the criteria relate to each other (not in isolation)

- they do each analysis separately (e.g., analyze the survey; analyze the interviews)

- then map that to the rubric

- then take that to the stakeholders for sensemaking; stakeholders can help you understand why you saw what you saw (e.g., when you see what might seem like conflicting results)

- like with other evaluation stuff, might not say “we are building a rubric” to stakeholders at the start (it’s jargon). Instead, ask questions like “what is important to you?” or “If you were participating *as* Māori, what would that look/sound/feel like to you?”
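
To make the three core parts of a rubric (criteria, levels, weighting) a bit more concrete, here is a minimal Python sketch of how per-criterion ratings might be rolled up into an overall rating. Everything specific in it is an assumption for illustration only: the numeric scores attached to each level, the example weights, and the rule that a single “harmful” rating is surfaced instead of being averaged away. None of this was presented by McKegg and Wehipeihana, and a real rubric would be built with stakeholders through sensemaking, not computed mechanically.

```python
import math

# Levels ordered from worst to best; the position in the list is used as a score.
# The level names echo the examples in my notes; the scoring is hypothetical.
LEVELS = ["harmful", "bad", "poor", "good", "excellent"]


def overall_rating(ratings, weights):
    """Combine per-criterion levels into one overall level using weights.

    ratings: dict mapping criterion name -> level name (must be in LEVELS)
    weights: dict mapping criterion name -> relative importance (positive number)
    """
    if set(ratings) != set(weights):
        raise ValueError("ratings and weights must cover the same criteria")

    # Assumption: any "harmful" rating is reported as-is, so that harm on one
    # criterion cannot be hidden by strong results on the others.
    if "harmful" in ratings.values():
        return "harmful"

    total_weight = sum(weights.values())
    weighted_score = sum(
        LEVELS.index(ratings[c]) * weights[c] for c in ratings
    ) / total_weight

    # Round half up to the nearest level.
    return LEVELS[math.floor(weighted_score + 0.5)]


# Hypothetical program: great reach, weak educational outcomes.
ratings = {"reach": "excellent", "educational outcomes": "bad"}

equal_weights = {"reach": 1, "educational outcomes": 1}
outcomes_matter_more = {"reach": 1, "educational outcomes": 3}

print(overall_rating(ratings, equal_weights))
print(overall_rating(ratings, outcomes_matter_more))
```

With equal weights the strong reach result pulls the overall rating up, while weighting educational outcomes more heavily pulls it down, which is the “what if you meet an unimportant target but don’t meet an important one?” situation in code form.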

Theory of Change

- to be a theory of change (TOC) requires a “causal explanation” (i.e., a logic model on its own is not a TOC – we need to talk about why those arrows would lead to those outcomes) (John Mayne) [This also came up as a question to my case competition team – and my team gave a great answer! Did I mention I’m so proud of them?]

- complexity affects the notion of causation – in complexity, there isn’t “a” cause, there are many causes (John Mayne)

- people assume you have to have a TOC that can fit on one page – but that doesn’t always work – can do nested TOCs (John Mayne)

- interventions are aimed at changing the behaviour of groups/institutions, so TOCs should reflect that (John Mayne)

- there is lots of research on behaviour change, such as on Bennett’s hierarchy, or the COM-B model (John Mayne):

- causal link assumptions – what conditions are needed for that link to work? (John Mayne) (e.g., could label the arrows on a logic model with these assumptions – Andrew Koleros)

Follow-Ups

As with pretty much any conference I go to, I came home with a reading list:

- The Reflective Practitioner: How Professionals Think In Action

- Evaluation Foundations Revisited: Cultivating a Life of the Mind for Practice

- The Boat People. Not about evaluation per se, but the author of this book was a plenary speaker and she piqued my interest in her book!

And some to dos:

- re-read the TRC Calls to Action and figure out which things I can take action on! And then take action!

- try writing “the Morning Pages”

- listen to the audiorecorded reflections that I made during the conference and document any insights I want to capture

- read all the books on the above list!

Sessions I Attended

- Workshop: Reflective Practice: The Bridge to Innovation by Gail Barrington

- Opening Keynote: Making Change – Perspective from Policy Advocacy and Implementation by Susanna Fuller

- Concurrent session: Steering Evaluation Through Uncharted Waters by M. Elizabeth Snow, Alec Balasescu, Abdul Kadernani, Sheila Matano, Stephanie Parent, Monika Viktorin, Shadi Mahmoodi, Sandra Wu [this was my presentation!]

- Concurrent session: Technology and Trust: Navigating Through the Business Case for Prosperity by Jillian Baker, Yasir Dildar, Carl Asuncion

- Concurrent session: Using data and systems thinking to build bridges in a large complex public sector organization by Gord Lucke

- Keynote Panel: The Next Generation of Theory-Based Evaluation by Andrew Koleros, John Mayne, Sebastian Lemire

- Concurrent session: Navigating beyond projects and programs: Lessons learned conducting strategy-level evaluations by Heather Smith Fowler, Jacey Payne, Julie Rodier

- Concurrent session: Much more than a pretty picture: Using visual models in collaborative evaluation by Barbara Szijarto

- Concurrent session: The Evolution of Infoway’s Measurement and Evaluation Strategy by Bobby Gheorghiu, Simon Hagens

- Concurrent session: Key lessons learned during the application of the realist approach: An example of a realist review and evaluation of violence prevention education in British Columbia healthcare by Sharon Provost, Michelle Naimi

- Concurrent session: Rubrics: the bridge that connects evidence and explicit evaluative reasoning by Kate McKegg, Nan Wehipeihana, Julian King, Judy Oakden, Adrian Field, Louise Were

- Lightning Roundtable: Tales of Inspiration: Use of Storytelling for Celebration, Learning, and Change by Tammy Horne

- Lightning Roundtable: Connecting to Reconciliation through our Profession and Practice by Larry Bremner, Nicole Bowman, Andrealisa Belzer

- Lightning Roundtable: Bridge Over Troubled Water: Communication Strategies to Influence Action on Undesirable Evaluation Results by Erica McDiarmid

- Closing Keynote: Bridges to Empathy by Sharon Bala

- Concurrent session: Evaluators as change agents in steering the bridge to realize the TRC Calls to Action using our Situational Practice Competency 3.7 by Keiko Kuji-Shikatani, Martha McGuire, Larry Bremner, Linda Lee
