The Canadian Evaluation Society’s national conference was held right here in Vancouver last month! I was one of the program co-chairs for the conference and I have to say that it was pretty awesome to see a year and a half’s worth of work by the organizing committee come to fruition! There were a lot of people involved in putting together the conference, and so many more parts to it than I had realized when I started working on it, so it was incredible to see everything work so smoothly!

As I usually do at conferences, I took a tonne of notes, but for this blog posting I’m going to summarize some of my insights by topic (in alphabetical order) rather than by session, as I went to some different sessions that covered similar things (though I’ve listed all the sessions I attended at the bottom of this posting). Where possible, I’ve included the names of people who said the brilliant things that I took note of, because I think it is important to give credit where credit is due, but I apologize in advance if my paraphrasing of what people said is not as elegant as the way that they actually said it.

Context

- Damien Contandriopoulos noted that context is often defined by what it is not – it is not your intervention – i.e., it’s whatever is outside your intervention, but not the entire universe outside of your intervention – just what is close enough to be relevant/important to the analysis. He also noted that some disciplines don’t talk about context at all (e.g., they might talk about the culture in which an intervention occurs, but don’t talk about it as separate from the intervention the way we talk about context as being separate from the intervention).

- Depending on your conceptualization of “context”, you may want to:

- neutralize the context (e.g., those who think that context “gets in the way” try to measure it and neutralize it so it won’t “interfere” with their results). Contandriopoulos clearly didn’t favour this approach, but noted that it could work if your evaluand was very concrete/clear.

- adapt to context

- describe the context

- In all of the above options, it’s about generalizability/external validity (e.g., if you are trying to neutralize the context, you want to know if the evaluand works and don’t want the context to interfere with your conclusion about whether the evaluand works; if you are adapting to the context, you want to figure out how the evaluand might work in a given context; if you are describing the context, you want to understand the context so you can use it to interpret your evaluation findings)

- From the audience, AEA president Kathryn Newcomer mentioned a paper by Nancy Cartwright about transferability of findings, specifically about how Cartwright talks about “support factors” rather than context. (She didn’t say the name of the paper or the journal, but based on her comments about the paper, I believe it is likely this paper. Unfortunately, it’s behind a paywall, so I can’t read more than the abstract.) Further, she talked about how in the US there is lots of interest in “scaling up” interventions, but rarely do studies document the support factors that allow an intervention to work (e.g., you need to have a pool of highly qualified teachers in the area for program X to work). She suggested:

- putting the support factors into the theory of change

- considering: how do we know if the support factors are necessary or sufficient? What if you need a combination of factors that need to be present at the same time and in certain amounts for the program to work? etc.

- Contandriopoulos mentioned that sometimes people just list “facilitators” and “barriers” as if that’s enough [but I liked Newcomer’s suggestion that “support factors” (or barriers, though she didn’t mention it) could be integrated into the theory of change]

Evaluation

- Kas Aruskevich showed an image of a river in Alaska viewed from above and noted that if you were standing by the side of that river, you’d never know what the sources of that river are (as they are blocked by mountains) and she likened evaluation to taking that perspective from a distance where you look at the whole picture. I liked this analogy.

- Kathy Robrigado talked about how the accountability function of evaluation is often seen as an antagonist to learning, but she sees it as a jumping off point for learning.

- In summarizing the Leading Edge panel, E. Jane Davidson had a few things to say that were very insightful in relation to thinking I’ve been doing lately with my team about what evaluation is (and how it compares/relates to other disciplines that aim to assess program/projects/etc.). With respect to monitoring, she noted that people often expect key performance indicators (KPIs) to be an answer, but they aren’t. Often what’s the easiest to measure is not what’s most important. In evaluation, we need to think about what’s most important (not just what’s strong or weak, but what really matters).

Evaluation, History of the Field

- Every time I go to an evaluation conference, someone gives a bit of a history of the field of evaluation from their perspective (perhaps one day I’ll compile them all into a timeline). This conference was no different, with closing keynote speaker Kylie Hutchinson talking about what she has seen as “innovations” in evaluation that had a lot of buzz around them and then eventually settled into an appropriate place [her description made me think of the “hype cycle”, which someone had coincidentally shown in one of the sessions that I was in]:

- 1990s – logic models

- 2000s – the big RCT debate (i.e., are RCTs really the “best” way to evaluate in all cases?)

- social return on investment (SROI), Appreciative Inquiry

- developmental evaluation, systems approaches

- deliverology

Evaluators, Role of

- Lyn Shulha noted that as an evaluator, you’ll never have the same context/working conditions from one evaluation to the next, and you’ll never have a “final” practice or theory – they will continue to change.

- Kathy Robrigado talked about starting an evaluation as an “evaluator as critical friend” (e.g., asking provocative questions to understand the program/context, offering critiques of a person’s work, providing data to be examined through another lens). But after a while, they found this approach to be too resource intensive, as they had ~60 programs to deal with and data collection was cumbersome; they moved from critical friend to “strategic acquaintance” (or, as she put it, “we had to friendzone the programs”).

- Michel Laurendeau stated that “evaluators are the experts in interpreting monitoring data” as what you see when you look at the data isn’t necessarily what is really going on [this reminded me of something that was discussed at last year’s CES conference: what the data says vs. what the data means]

- Kylie Hutchinson talked about how many evaluators are talking about the evaluator as a social change agent. People gravitate to this profession because they want to be involved in social change – maybe they are a data geek, but they see how the data can lead to social change. She also talked about how many skills she has needed to build to support her evaluation practice: in grad school she focused on methods and statistics, but when she went on to become a consultant she didn’t find that she needed advanced statistics – she needed skills in facilitation, then data visualization, and now organizational development.

Knowledge Translation

- Kim van der Woerd described getting knowledge into action as “the long journey from the head to the heart”. I really like this phrase, as just knowing something (with the head) doesn’t necessarily mean we take it to heart and put it into action. I wonder how thinking about how we can get things from the head to the heart could help us think about better ways to promote the translation of knowledge into action.

Learning

- Lyn Shulha talked about learning spirals – as we travel from novice to expert, we can imagine ourselves descending, say, a spiral staircase. At a given point, we can be at the same place as earlier, but deeper (as well, we are changed from when we were last at this point). She noted that we “need to hold onto our experiences and our truths lightly”, lest we end up traveling linearly rather than in a spiral.

Logic Models

- One of the sessions I was in generated an interesting discussion about different ways that people use logic models, such as:

- having the lead agency of a program create a logic model of how they think the program works and then having all the agencies operating the program create logic models of how they think the program works and then compare – if they have different views of how the program works, this can generate important discussions

- calling the first version of the logic model “strawman #1” to emphasize that the logic model is meant to be challenged and changed.

Reporting

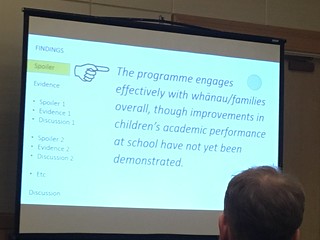

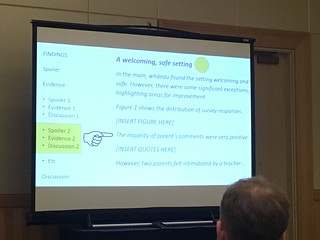

- Report structure recommended by Julian King in the Leading Edge panel on Rubrics:

- E. Jane Davidson noted that in social sciences, people are often taught how to break things down, but not how to pack it back together again to answer the big picture question. For example, you’ll often see people report the quantitative results, then the qualitative results, but with no actual mixing of the data (so it’s not really “mixed methods” – it’s more just “both methods”).

- Also from E. Jane Davidson – the length of a section of a report is typically proportional to how long it took you to do the work (which is why literature reviews are so long), but that’s not what’s most useful to the reader. It’s like we feel we have to put the reader through the same pain we went through to do the work; we want them to know we did so much work! And then they get to the end and we say “the results may or may not be…. and more research is needed.” Not helpful! Spoilers really are key in evaluation reporting – write it like a headline. Pique their interest with the spoiler and then they want to read the evidence (how did they decide that?).

- 7 +/- 2 key evaluation questions (KEQ):

- executive summary: KEQ 1, answer + brief evidence; KEQ 2, answer + brief evidence; KEQ 3, answer + brief evidence

- and make sure your recommendations are actionable!


Rubrics

- The Leading Edge Panel on Rubrics was easily my favourite session of the conference. I’ve done a bit of reading about rubrics after going to a session on them at the Australasian Evaluation Society conference in Perth, but found that this panel really brought the ideas to life for me.

- Kate McKegg mentioned that she asked a group of people in healthcare if they thought that their organization’s key performance indicators (KPIs) reflected the value of what their organization does, and not a single person raised their hand [This resonated with me, as my team and I have been doing a lot of work lately on differentiating, among other things, monitoring and evaluation.]

- Rubrics:

- can help clarify what matters and include those things in your evaluation

- are made of:

- evaluative criteria – to come up with these, can check out the literature, talk to experts, talk to stakeholders (e.g., people on the front lines); can also think about what would be appropriate for the cultural context (e.g., what would make a program excellent in light of the cultural context?)

- levels of importance (of the criteria) – remember, things that are easy to measure are not necessarily what’s important

- rating scale – how to determine the level of performance (e.g., excellent-very good-good-adequate-emerging-not yet emerging-poor); depending on your context, you may choose different words (e.g., may use “thriving” instead of “excellent”)

- can be:

- analytic – describe the various performance levels for each criterion

- holistic – a broad level of description of performance at each level (e.g., describe “excellent” overall (encompassing all the criteria) rather than describing “excellent” for each criterion individually)

- analytic rubrics can provide more clarity, but require more data

- You should be able to see your theory of change in the rubric. Key evaluation questions (KEQ) often follow the theory of change (e.g., KEQs might be “how well are we implementing?” or “how well are we achieving outcome #1?”). Think about the causal links in the theory of change and their strength. If there is a deal breaker, it should show up in the theory of change.

- You can embed cultural values into the process (e.g., for the Maori, the word “rubric” didn’t resonate, so Nan Wehipeihana used a cultural metaphor that did; rather than words like “poor” and “excellent”, can use words that fit better like a “seed with latent potential” and “blooming” and “coming to fruition”)

- Values are the basis for criteria – they reflect what is valued (and whose values hold sway matters)

- Once you have a rubric, you need to collect data to “grade” the program using the rubric; data may come from all sorts of places (e.g., previous research, administrative data, photos from the program, interviews/surveys/focus groups)

- Can make a table of each criterion and data source and use that to optimize your data collection:

| Admin Data | Interview Staff | Interview Participants | Photos from the Program | |

| Criterion 1 | x | x | ||

| Criterion 2 | x | x | x | |

| Criterion 3 | x | x | ||

| Criterion 4 | x | x | ||

| Criterion 5 | x | x | x |

- Then you can look at all the things you want to collect from each data source (e.g., you can ask about criteria 2, 4, and 5 in interviews with staff; look for criteria 1, 2, and 3 in the photos from the program) = integrated data collection

- Make sure that the data collection is designed to answer the evaluation questions.

- Look to see if you are getting consistent information (i.e., saturation) or if the data is patchy or inconsistent and you need to get more clarity.

- Bring data to stakeholders as you go along (especially for long evaluations – they don’t want to wait until the end of 3 years to find out how things are going!)

- 3 steps to making sense of data:

- analysis – breaking something down into its component parts and examining each part separately (King et al, 2013)

- synthesis – putting together “a complex whole made up of a number of parts or elements ” (OED online); assembling the different sources of data. Sometimes when you are working on data synthesis, you learn that what’s important isn’t what you initially thought was important (so you need to rejig your rubric). Also think about what the deal breakers are (e.g., if no one shows up to the program…)

- sensemaking: helps to clarify things; one way to do this is to get all the stakeholders together, give them the synthesized data (a rough cut), and go through a process like this:

- generalization: In general, I noticed…

- exception: In general…, except….

- contradiction: On one hand…, but on the other hand…

- surprise: I was surprised by…

- puzzle: I wonder…

- When you think about the exceptions or contradictions – how big of a deal are they? Are they deal breakers?

- As stakeholders do this, they start to understand the data and to own the evaluation. Often they make harder judgments than the evaluator might have.

- Typically, the evaluators do the synthesis and bring that to the stakeholders to do sensemaking; but don’t spend a lot of time making the synthesized data look polished/finished – it should look rough as it is to be worked with. Not everyone will spend time reading the data synthesis in advance, so give them time to do that at the start of the session.

- Put up the rubric and have the stakeholders grade the program.

- Often people try to do analysis, synthesis, and sensemaking all at the same time, but you should do them separately.

- Rubrics “aren’t just a method – they change the whole fabric of your evaluation”. They can help you “mix” methods (rather than just doing “both” methods) – they can help you make sense of the “constellation of evidence”.

- I asked how they deal with situations that are dynamic. Their answer was that rubrics can evolve, especially with an innovative program. You create it based on what you imagine the outcome will be, but other things can emerge from the program. You can start with a high level rubric (you don’t want to get so detailed or overspecified that you paint yourself into a corner). You need it to be underspecified enough to be able to contextualize it to the setting. It’s like the concept of “implementation fidelity” – implementing something exactly as specified is not the best – you should be implementing enough of the intent in a way that will work in the setting.

- Another audience member asked how you would determine whether a rubric is valid/reliable. The speakers noted that often people ask “is it a valid tool?” meaning “was it compared to a gold standard/previously validated tool?” But those other tools are often too narrow/miss the mark. The speakers suggested that “construct validity is the mother of all validities” – the most important question is “is it useful for the people for whom it was built?”

- Another audience member asked about “scaling up” rubrics. The speakers noted examples where they had worked on projects to create rubrics to be used across a broader group than those who created it – e.g., created by the Ministry of Education to be used by many different schools with the help of a facilitator. For these, you need to have a lot more detail/instructions on how to use it (and a good facilitator) since users won’t have the shared understanding that comes from having created it. They have also done “skinny rubrics” to be used by lots of different types of schools (so had to be underspecified), but again, need to provide lots of support to users.
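For anyone who keeps their evaluation planning in a script or notebook, here is a minimal sketch of the criteria-by-data-source table from the rubric notes above, flipped around to give a per-source collection plan (the “integrated data collection” idea). The mapping is my own illustrative assumption: it matches the examples in the notes (criteria 2, 4, and 5 in staff interviews; criteria 1, 2, and 3 in the photos), but the remaining cells and the function name `criteria_by_source` are made up for the example.

```python
# Hypothetical mapping of rubric criteria to the data sources that can inform them.
# Only the staff-interview and photo assignments come from the notes; the rest is assumed.
coverage = {
    "Criterion 1": {"Admin Data", "Photos from the Program"},
    "Criterion 2": {"Interview Staff", "Interview Participants", "Photos from the Program"},
    "Criterion 3": {"Admin Data", "Photos from the Program"},
    "Criterion 4": {"Interview Staff", "Interview Participants"},
    "Criterion 5": {"Admin Data", "Interview Staff", "Interview Participants"},
}


def criteria_by_source(coverage):
    """Invert criterion -> sources into source -> sorted list of criteria."""
    by_source = {}
    for criterion, sources in coverage.items():
        for source in sources:
            by_source.setdefault(source, []).append(criterion)
    return {source: sorted(criteria) for source, criteria in by_source.items()}


plan = criteria_by_source(coverage)

# Print one line per data source listing everything to collect from it.
for source in sorted(plan):
    print(f"{source}: {', '.join(plan[source])}")
```

Reading the plan by data source rather than by criterion makes it easier to build one interview guide or one photo review checklist that covers everything that source needs to answer.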

Systems Thinking

- Systems archetypes are common patterns that emerge in systems. This was a concept that was brought up by an audience member in my session on complexity, and is something I want to read more about!

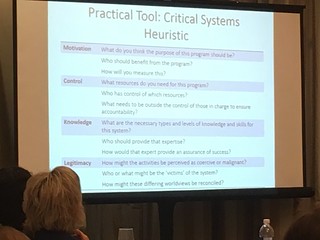

- Heather Codd talked about three key concepts in using systems thinking (using Donella Meadows’ definition of a system as something with parts, links between parts, and a boundary) in evaluation:

- interrelationships – understanding the interrelationships and what drives them helps us to understand what’s going on with the program (and she suggested using rich pictures to help focus the evaluation and think about what the consequences of the program might be)

- boundaries – we need to pick a boundary for the purpose of analysis, but note that it is sensitive because it defines what is in and out of the evaluation. She suggested using critical systems heuristics to help describe the program, scope the evaluation, and decide on an evaluation approach.

- multiple perspectives – what are the world views being applied and what are the implications of those world views? She suggested you can do a stakeholder analysis, but also a stake analysis; she also suggested “framing” by using an idea from Bob Williams, where you add the words “something to do with…” in front of ideas (e.g., “something to do with a culture of health”, “something to do with managing heart disease”); this tool can help give you a sense of the intervention’s purpose and the evaluation’s purpose.

- Evaluators are an element in a system and we cannot separate out our effect on the systems [This made me think of “co-evolution” – the evaluation co-evolves along with the rest of the system]

- There are echoes in a system of what has happened before [e.g., intergenerational trauma]

Truth & Reconciliation

- Last year, the CES took a position on reconciliation in Canada. Several of the speakers at the conference talked about this topic. For example, Kim van der Woerd talked about a witness as being one who listens with their whole heart and validates a message by sharing it (and that they have a responsibility to share it). She also noted that the Truth and Reconciliation Commission (TRC) wasn’t Canada’s first attempt at trying to build a good relationship between Aboriginal and non-Aboriginal people – the Royal Commission on Aboriginal Peoples put out a report with recommendations in 1996. But when it was evaluated in 2006, Canada received a failing grade with 76% of the 400+ recommendations being not done and with no significant progress. She noted that we shouldn’t wait 10 years before we evaluate how well Canada is doing on the TRC recommendations.

- Paul Lacerte outlined a set of recommendations:

- amplify the new narrative (where the old narrative was “the federal government takes care of the natives”)

- conduct research & develop a reconciliation framework

- set targets for recruiting and training indigenous evaluators

- learn about and follow protocol (e.g., how to start a meeting, gift giving)

- put up a sign in your workspace about the traditional territory on which you are working

- volunteer for an indigenous non-profit

- join the Moose Hide Campaign

- At the start of her closing keynote, Kylie Hutchinson acknowledged that she was speaking on the unceded traditional territory of the Musqueam, Squamish, and Tsleil-Waututh First Nations. And then she said that she’d never said that before speaking, but that she would from now on. And I thought that it was a really cool thing to witness someone learning something new and putting it into practice like that, especially something so meaningful.

Misc:

- The best joke I heard in a presentation was when Kathy Robrigado, after a few acronym-filled sentences in her presentation, said, “As you know, government employees are paid by the number of acronyms they use”

To Dos:

- read more about systems archetypes

- read more about critical systems heuristics

- check out Jane Davidson’s website: realevaluation.com

- check out Better Evaluation’s page on rubrics

Sessions I Attended:

- Opening Keynote by Kim van der Woerd and Paul Lacerte

- Short presentation: Causing Chaos: Complexity, theory of change, and developmental evaluation in an innovation institute by Darly Dash, Hilary Dunn, Susan Brown, Tanya Darisi, Celia Laur

- Short presentation: Implications of complexity thinking on planning an evaluation of a system transformation by M. Elizabeth Snow, Joyce Cheng [This was one of my own presentations!]

- Short presentation: Cycles of Learning: Considering the Process and Product of the Canadian Journal of Program Evaluation Special Issue by Michelle Searle, Cheryl Poth, Jennifer Greene, Lyn Shulha

- Short presentation: Using System Mapping as an Evaluation Tool for Sustainability by Kas Aruskevich

- Incorporating influence beyond academia data into performance measurement and evaluation projects by Christopher Manuel

- Exploring Innovative Methods for Monitoring Access to Justice Indicators by Yvon Dandurand, Jessica Jahn

- A Quasi-Experimental, Longitudinal Study of the Effects of Primary School Readiness Interventions by Andres Gouldsborough

- What Would Happen If…? A Reflection on Methodological Choices for a Gendered Program by Jane Whynot, Amanda McIntyre, Janice Remai

- Towards Strategic Accountability: From Programs to Systems by Kathy Robrigado

- Getting comfortable with complexity: a network analysis approach to program logic and evaluation design by John Burrett

- Communication in System Level Initiatives: A grounded theory study by Dorothy Pinto

- Seeing the Bigger Picture: How to Integrate Systems Thinking Approaches into Evaluation Practice by Heather Codd

- Understanding and Measuring Context: What? Why? and How? by Damien Contandriopoulos

- A Graphic Designer, an Evaluator, and a Computer Scientist Walk into a Bar: Interdisciplinary for Innovation by M. Elizabeth Snow, Nancy Snow, Daniel J. Gillis [This was another one of my presentations and hands down the best presentation title I’ve ever had]

- Big Bang, or Big Bust? The Role of Theory and Causation in the Big Data Revolution by Sebastian Lemire, Steffen Bohni Nielsen

- Using Web Analytics for Program Evaluation – New Tools for Evaluating Government Services in the Digital Age at Economic and Social Development Canada by Lisa Comeau, Alejandro Pachon

- The Future of Evaluation: Micro-Databases by Michel Laurendeau

- Dylomo: Case studies from an online tool for developing interactive logic models by M. Elizabeth Snow, Nancy Snow [This was the last of my presentations]

- Development and use of an App for Collecting Data: The Facility Engagement Initiative by Neale Smith, Graham Shaw, Chris Lovato, Craig Mitton, Jean-Louis Denis

- Leading Edge Panel: Evaluative Rubrics – Delivering well-reasoned answers to real evaluative questions by Kate McKegg, Nan Wehipeihana, Judy Oakden, Julian King, E. Jane Davidson

- Closing Keynote by Kylie Hutchinson

Next CES Conference:

- Host: Alberta & Northwest Territories conference

- May 26-29 – Calgary

- May 31-June 1 – Yellowknife

- Theme: Co-creation


This is great! I just came across this blog. Love how thorough your notes are, and am happy you appreciated the joke. 😛